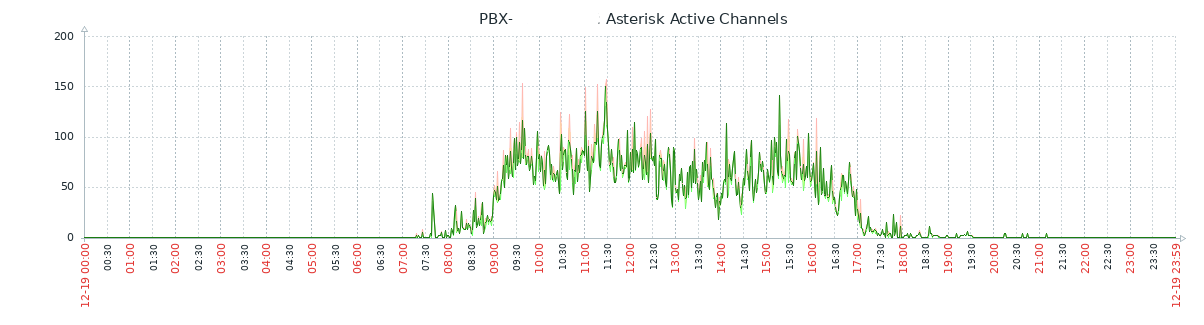

In the last post I migrated a Grafana database to a new server and took the opportunity to upgrade the Grafana version in the process.

This created a new issue. Because Grafana’s supported browser list moves with the times, my two “NOC” screens, each a Raspberry Pi 3A running Raspbian Buster with a Hyperpixel 4.0 display, no longer worked. Instead, the outdated Chromium browser on each now displayed the “If you’re seeing this Grafana has failed to load its application files” page when visiting the Grafana web interface.

Bringing the OS on the Pis up to date is the logical resolution. However, there are a couple of issues with this:

- One of the Hyperpixel 4.0 screens is an early revision model, meaning the official and legacy drivers do not work.

- Even with working screen drivers, compatible browsers will not load in the 512 MB of RAM available on the Pi 3A.

Not wanting to obsolete a pair of Pi 3As with a now unique form factor, I found a workaround to continue displaying Grafana dashboards on this older hardware. The solution even gave a slight benefit over the original URL…